As Truth-in-Lending laws celebrate half a century of failure, and as consumers, especially those with low incomes, continue to make disastrous credit decisions, lawmakers are looking to reboot the disclosure paradigm. Energized by insights from behavioral economics, twenty-first-century regulators are rapidly discarding the old idea of “comprehensive” disclosure. Instead, they are developing compact disclosure templates that are psychologically smart, graphically appealing, and timely. But now a new study by Seira et al. has put an array of these smart disclosures to the test. And the results are devastating.

Smart disclosures seem to make perfect sense. If consumers need information to make good decisions, it should be delivered to them in a user-friendly manner. Smart disclosures should provide salient “total cost of credit” scores. They should “nudge” debtors to avoid massive debt, for example by showing them the real cost of making only minimum monthly payments. They should harness “peer effects” by warning people when their debt is above average for similar consumers. And they should arrive via eye-popping, easy-to-understand media.

Some of these techniques show modest promise in the lab. But would they work in the real lives of consumers? A few years ago I co-wrote a book on this topic (Ben-Shahar and Schneider, 2014). On the basis of evidence from prior rounds of disclosure reform and a diagnosis of why those disclosures failed, we predicted that the new round of smart disclosures would not bring improvements. Not surprisingly, our book did not slow down the legions of enthusiastic disclosurites. Hopes were high that behaviorally informed disclosure regulations would make a successful transition from the lab to the street.

Some disappointing evidence began to arrive, primarily in an excellent paper by Agarwal et al. (2015), showing that the 2009 CARD Act reform requiring the months-to-pay disclosure nudge had no meaningful effect. But the recently published paper by Seira, Elizondo, and Laguna-Müggenburg is truly a game changer. It quashes the hope that the new paradigm of disclosure would succeed where its predecessors failed.

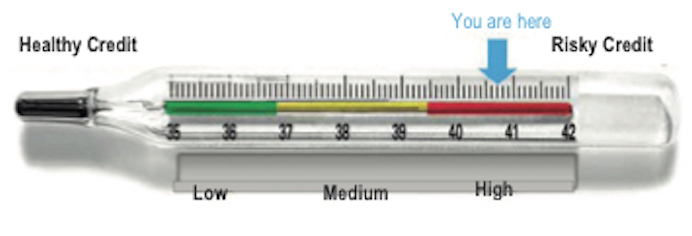

The paper reports a large-scale random-assignment experiment conducted in Mexico. With the cooperation of a big bank, new disclosures were sent to tens of thousands of highly indebted credit card holders. These disclosures were designed by the researchers to prompt the consumers to reduce their borrowing and their debt balances. The disclosure were based on the most up to date techniques: salient display of the (personalized) interest rate, a months-to-pay nudge, a “social comparison” informing consumer when their debt was above (or below) average for like customers, pictures suggesting the consumer was in high-risk terrain (like the one depicted below), and even a warning against overconfidence.

Copyright © American Economic Association 2017. Reprinted with permission of the AEA and the authors.

There was every reason to expect some effects, if not major ones. The recipients were highly indebted, initially unaware of their interest rate, and overconfident about their ability to pay their debt. They were paying large portions of their income toward finance charges. Had they only read and acted on the disclosures, they could have switched to much cheaper debt (for example, by transferring balances).

But on every measure of response, the innovative disclosures were a miserable letdown. Even when displayed saliently, the interest rate disclosure did not change levels of debt, rates of delinquency, or account switching. The months-to-pay disclosure, designed to urge overly optimistic people to pay more than the minimum, had no effect on debt. Ironically, it led to increased rates of default, perhaps by instilling a sense of apathy among debtors who realized the futility of trying to pay back incrementally.

The peer comparison disclosures had an especially interesting effect. Telling people (half the population) that they are higher-than-average risk caused a small decrease in debt. That’s good. But the flip side was that telling the other half that they are lower-than-average risk caused a corresponding increase in debt. Overall debt payments under this disclosure intervention actually went down by about 10 percent, the opposite of the intended consequence.

The main lesson, the authors conclude, is that “all treatments have zero or tiny effects in all outcomes measured.” They explain that “this zero effect is quite precise and robust across subsamples” and “not due to low statistical power.” Where non-zero effects were found, they “were relatively small and short-lived, lasting only one or two months.”

This is an important paper. It tested the most widely advocated interventions where they were most likely to work and on the people most direly in need of them. It was not a make-believe synthetic scenario in a social science lab but a real market intervention. It assigned treatment and control groups randomly and collected observations from more than 160,000 participants. The null effect is therefore best interpreted as affirmative proof that these disclosures had no impact on any important consumer decision or welfare measure.

Why did these smart disclosures fail? Ultimately, because the decisions consumers face are complex. You can simplify the disclosures, but not the problems. Are low-income consumers, who carry large credit card debts and receive endless notices and prompts from numerous vendors, truly able to read and understand every notice the bank mails them? Even if consumers somehow homed in on this specific anguish (how to reduce credit card debt), they would need much more information to make good decisions.

More fundamentally, the failure of smart disclosures reminds us that the problem for most indebted consumers is not information. People know intuitively when they borrow too much, even if they cannot quantify this intuition. The problem for low-income borrowers is, well, . . . poverty. They borrow to pay for urgent needs. Seira et al. provide a timely reminder that mandated disclosure, including its most methodologically sound version, is not a panacea.